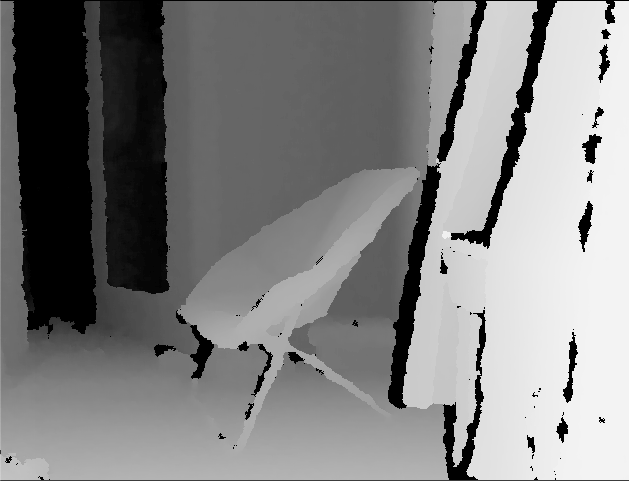

Snapshot is a Kinect-based project that displays a composite image of a location captured over time, layered by depth. Each layer of the image contains the outlines of objects captured within a different depth range at a different time. The layers are ordered by time: the oldest capture sits in the background and the newest in the foreground.

Nov 22 - Dec 6, 2022

(2 weeks)

openFrameworks

Kinect

Thermal Printer

Inspiration

Extending from the Travel Over Time project, I wanted to find another way to capture a location over time.

Depending on the time of the day, the same location may be empty or extremely crowded.

Sometimes something unexpected passes by the location, but it can only be seen at that specific time.

Wondering what the output would look like if each depth layer were a capture of the same location from a different point in time, I developed a program that saves the Kinect input image separately for each depth range and compiles randomly selected past images, one per layer, in chronological order.

Process

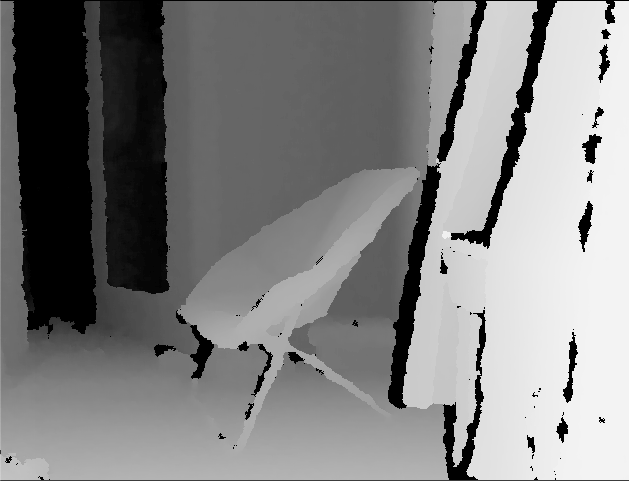

I first used the Kinect to get the depth data of the scene.

Then I created a point cloud, using the depth information as z.

I then divided the points into 6 buckets based on their depth range, coloring each point with an HSB value that is also based on depth.

I also created a triangular mesh from the points in each bucket.

I wrote a function that automatically saves the data per layer at a set time interval (e.g. every minute or every 10 seconds).
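The bucketing step above can be sketched in plain C++ as follows. This is a minimal illustration and not the project's actual code: the function names (`bucketForDepth`, `hueForDepth`, `splitIntoBuckets`), the millimetre units, the equal-width ranges, and the near/far limits are all assumptions made for the example.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: map a depth reading (assumed to be in millimetres)
// to one of `bucketCount` equal-width ranges between nearMm and farMm.
// Returns -1 for readings outside [nearMm, farMm), e.g. 0 for "no reading".
int bucketForDepth(int depthMm, int nearMm, int farMm, int bucketCount) {
    assert(farMm > nearMm && bucketCount > 0);
    if (depthMm < nearMm || depthMm >= farMm) return -1;
    long long offset = depthMm - nearMm;
    long long span = farMm - nearMm;
    return static_cast<int>(offset * bucketCount / span);
}

// Hypothetical helper: derive a hue in 0..255 from depth, so that colour
// also encodes distance (near = 0, far = 255).
int hueForDepth(int depthMm, int nearMm, int farMm) {
    assert(farMm > nearMm);
    int clamped = std::max(nearMm, std::min(depthMm, farMm));
    return static_cast<int>(255LL * (clamped - nearMm) / (farMm - nearMm));
}

// Group the pixel indices of a depth frame by bucket. Each inner vector
// holds the indices of the points that fall into that depth range.
std::vector<std::vector<std::size_t>> splitIntoBuckets(
        const std::vector<int>& depthFrame,
        int nearMm, int farMm, int bucketCount) {
    std::vector<std::vector<std::size_t>> buckets(bucketCount);
    for (std::size_t i = 0; i < depthFrame.size(); ++i) {
        int b = bucketForDepth(depthFrame[i], nearMm, farMm, bucketCount);
        if (b >= 0) buckets[b].push_back(i);
    }
    return buckets;
}
```

With an assumed range of 500 mm to 3500 mm and 6 buckets, each bucket covers 500 mm of depth. In an openFrameworks app, the index lists could then be used to build one mesh per bucket.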

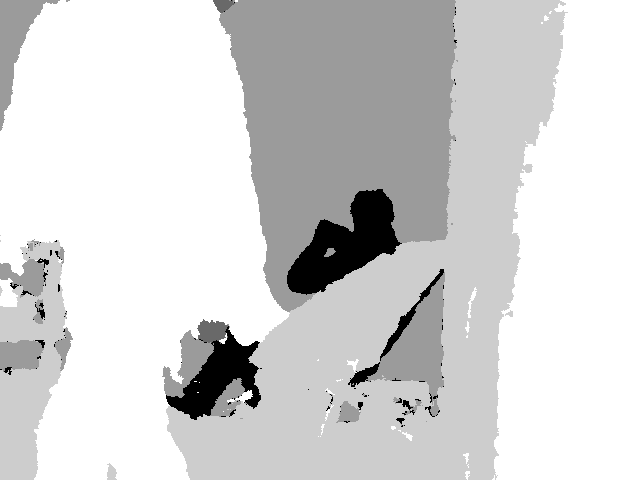

These are the different views from the Kinect:

(Clockwise from top left: depth data, RGB data, object outlines, compiled depth buckets in grayscale, individual bucket in grayscale)

How It Works

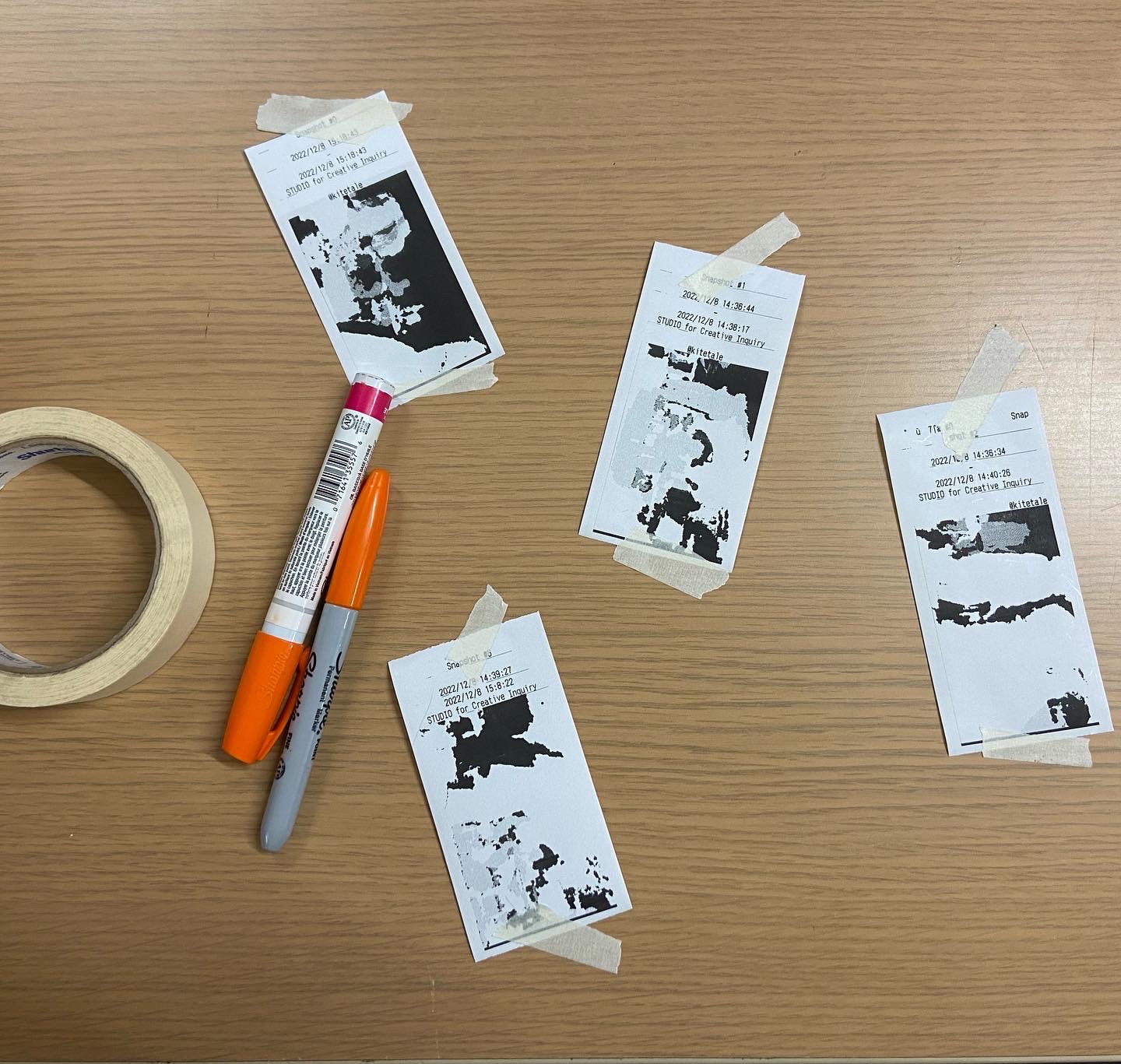

Upon pressing a button to capture, the program generates 6 random numbers and sorts them so that the captured layers are pulled in chronological order. It then combines the layers by taking the largest pixel value across the 6 layer images. Once the image is constructed, the program frames it in a Polaroid template, with the location and timeframe (also saved and retrieved in the same manner as the layers) shown below the image.
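The selection and compositing described above might look roughly like this in plain C++. It is a sketch under assumptions, not the project's implementation: `pickCaptureIndices`, `compositeMax`, and the `Layer` type are hypothetical names, layers are treated as flat 8-bit grayscale buffers of equal size, and the random draws are allowed to repeat because the original does not say whether they must be distinct.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <random>
#include <vector>

// Assumed representation: one grayscale image stored as a flat byte buffer.
using Layer = std::vector<std::uint8_t>;

// Draw one random capture index per layer, then sort ascending so the
// first entry is the oldest capture (intended for the background layer)
// and the last entry is the newest (intended for the foreground layer).
std::vector<std::size_t> pickCaptureIndices(std::size_t captureCount,
                                            std::size_t layerCount,
                                            std::mt19937& rng) {
    assert(captureCount > 0);
    std::uniform_int_distribution<std::size_t> dist(0, captureCount - 1);
    std::vector<std::size_t> picks(layerCount);
    for (auto& p : picks) p = dist(rng);
    std::sort(picks.begin(), picks.end());
    return picks;
}

// Combine layers by keeping, for every pixel, the largest value found
// across all layer images.
Layer compositeMax(const std::vector<Layer>& layers) {
    assert(!layers.empty());
    Layer out(layers.front().size(), 0);
    for (const Layer& layer : layers) {
        assert(layer.size() == out.size());
        for (std::size_t i = 0; i < out.size(); ++i) {
            out[i] = std::max(out[i], layer[i]);
        }
    }
    return out;
}
```

Taking the per-pixel maximum means that a bright outline in any one layer survives in the final image, while empty (dark) regions of one layer never hide content from another.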

Exhibition Installation